Ever wonder what INFRASTRUCTURE really means for politicians?

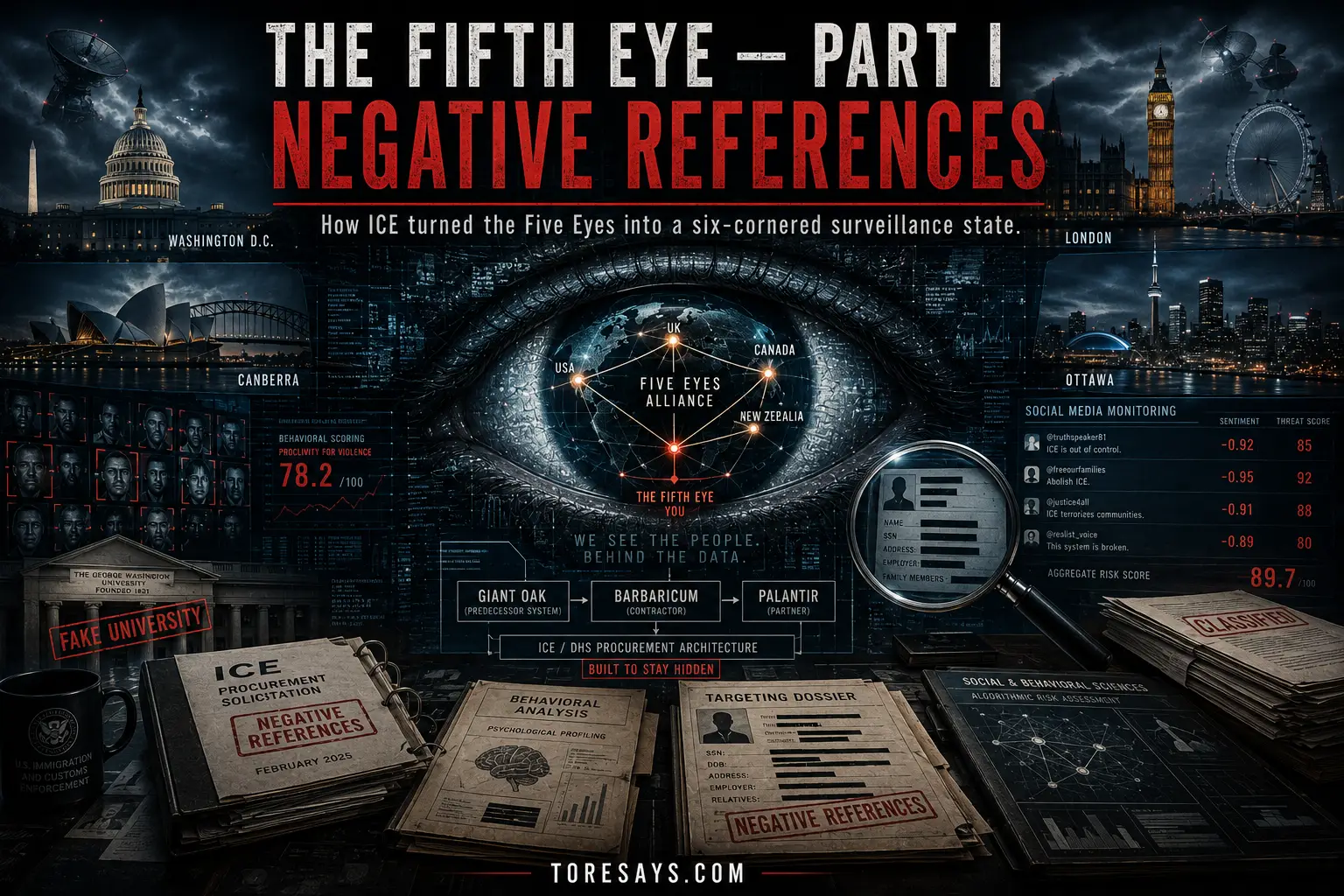

Integrating artificial intelligence (AI) into political processes has not just brought about changes; it has revolutionized how campaigns are run, policies are analyzed, and public opinions are monitored. While these advancements offer numerous benefits to the government, they also contribute to the complex and synchronized nature of online discourse, providing more control to AI and potentially undermining free will and choice. I chose to write about this topic to help many understand the transformative role of AI in politics, the concerns arising from its use, and the implications for online communication. Additionally, this insight sheds light on the “infrastructure” parroted by politicians globally.

Politicians and strategists are leveraging the latest advancements in political campaigning, with AI at the forefront of this revolution. One of the critical subjects is predictive analytics, often humorously referred to as “time travel” due to its precision.

Despite its decades-long existence, AI is often seen as an innovation because we constantly uncover its impacts on our daily lives, careers, and self-governance systems. Initially, AI’s development was deeply rooted in computational linguistics, with the aim of assisting machines in understanding human language. This foundational aspect of AI empowers machines to bridge the gap between human communication and technology, showcasing the dynamic nature of this technology.

Today, AI has become integral to government operations. The federal government utilizes AI across various sectors, including healthcare, transportation, environmental management, and benefits delivery, to enhance public services. AI supports public policy objectives in emergency services, health, and welfare and facilitates better interaction between the public and government entities.

CAMPAIGNS BESPOKE TO TARGET DEMOGRAPHIC

By analyzing vast datasets, AI creates messages tailored to different target demographics, increasing engagement and effectiveness. However, a study by the Pew Research Center highlighted that this personalization often results in the creation of echo chambers, where individuals are only exposed to information that reinforces their preexisting beliefs or promotes specific ones. This raises concerns about the broader implications for democratic discourse, especially on social media.

Is Fact-Checking Feasible?

The benefits and pitfalls of automated fact-checking are becoming increasingly evident as AI-driven tools are postured to play a crucial role in combating misinformation. These tools have been instrumental in allegedly verifying the accuracy of statements made by politicians, significantly enhancing the integrity of political discourse. According to the Poynter Institute, AI fact-checking systems have effectively flagged false information, thereby curbing the spread of misleading narratives. The question then is, who decides what is true? Is that another AI program, or is it the truth the PROGRAMMERS want to ensure is solidified?

Such systems do have limitations, and the primary concern is the most obvious. The programmers are human and have perspectives; therefore, potential bias is embedded within the data and algorithms that drive these tools. Bias can stem from the datasets used to train AI models, which might not represent diverse viewpoints or might reflect existing prejudices. This can lead to uneven fact-checking outcomes, where some types of misinformation are more rigorously scrutinized than others. The Guardian also reports that automated fact-checking tools sometimes struggle with nuanced statements, where context and subtlety are crucial for accurate evaluation. This is part of the reasoning behind “Community Notes” on X, which Elon Musk has championed in order to use humans, not AI, to “fact-check”.

While such automated systems can process vast amounts of data quickly, they lack the human judgment necessary to interpret complex statements fully. This limitation underscores the need for a hybrid approach that combines AI efficiency with human oversight. A study by the Journal of Artificial Intelligence Research highlights the importance of continuous human intervention to validate and refine AI findings, ensuring a balanced and comprehensive fact-checking process.

GOVERNMENT OPERATIONS, aka Infrastructure

Government operations are becoming increasingly streamlined as agencies adopt AI technologies to reduce manual processes and harvest citizen engagement to enhance the AI’s learning process and algorithms. AI systems have revolutionized government functions, leading to greater efficiency and automation.

Companies like McKinsey & Company, which have an unspoken goal of automated government, subtly promote the idea of an AI government by highlighting several case studies where AI significantly improved service delivery and operational effectiveness within government agencies. For instance, AI-powered chatbots handle routine inquiries, freeing up human staff to focus on more complex issues, and machine learning algorithms optimize resource allocation and fraud detection.

It’s evident to most HUMANS that AI-powered chatbots don’t always get it right. Who do you think runs the IRS, humans or AI? I propose you consider that question.

However, even the entities that parrot these so-called advancements, and that seek to gain more real estate in the battle for control, have acknowledged some cautionary notes regarding integrating AI into government operations. One significant concern is the potential lack of human empathy in AI-driven decisions. Automated systems, while efficient, can sometimes fail to consider the nuanced, personal aspects of human interactions that are crucial in public service. This issue was underscored in a comprehensive review by the Brookings Institution, which pointed out that AI systems could inadvertently perpetuate biases present in their training data, leading to unfair outcomes in areas such as social services and law enforcement.

Do you think AI is novel? St. George’s Hospital Medical School in London used a computer algorithm to screen applicants for admission during the 1980s. This system was found to discriminate against women and people with non-European names, leading to its removal from use. This case underscores the longstanding issues of bias in AI systems, dating back to the early days of artificial intelligence development.

The World Economic Forum emphasizes that integrating AI into government operations should be accompanied by robust frameworks to address these ethical concerns and ensure transparency. However, how can any robust ethical framework exist when the source of the issue is the programmer? If the programmer has a different world view (as all do; it’s personalized to each one’s experiences), how can it be “fair”? One man’s ethics is another man’s nightmare. Some people perceive people of another class as “useless” or “parasites” due to their intellect, socio-economic backgrounds, and even superficial things like race and sex. There is solid evidence of such concerns well documented in 4 decades-long studies of the “looking glass.” Every outcome, every prediction, and every calculation was determined from the operator’s perspective. Objectivity doesn’t really exist (one can argue empathy provides objectivity), just like free will has been non-existent in this era of information war for almost a century.

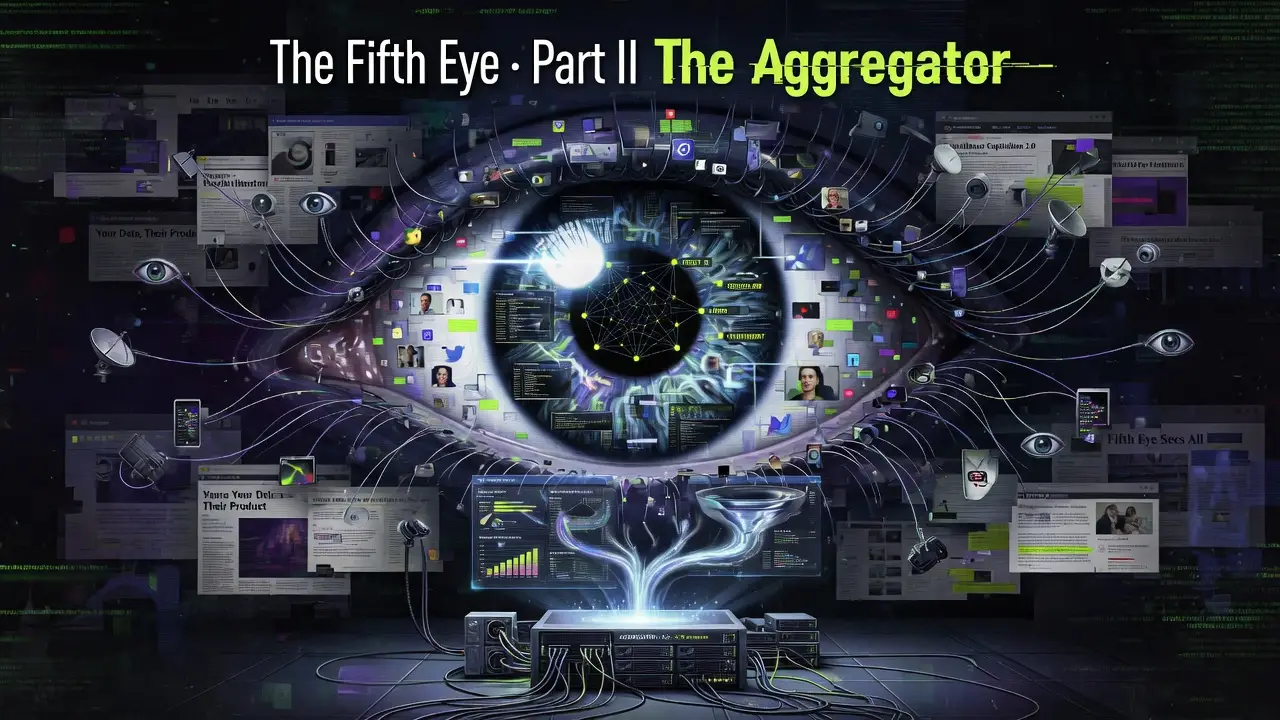

A wide range of dynamic AI applications allow for identifying emerging issues by monitoring social media platforms and news outlets. For example, Grok, an AI created by xAI and built into X, draws on X’s social media posts. A simple example of this is shown in the video below.

Evidently, this capability enables politicians to stay ahead by proactively addressing public concerns and keeping their campaigning overhead down. Why go around giving speeches to all your constituents when AI can do all the work for you? However, as emphasized in an article by Harvard Business Review, this focus on trending issues can sometimes lead to the neglect of less visible but equally important concerns. The Harvard Business Review highlights the need for a balanced approach to ensure that critical yet less prominent issues are not overlooked.

Policy analysis has benefited dramatically from AI, which provides policymakers with comprehensive insights by analyzing public opinions, legislative documents, and research papers. This data-driven approach is meant to ensure decisions are based on the most current information available, while still requiring a balanced approach that incorporates human judgment.

Do you still think the bills passed with thousands of pages of caveats, hidden legislation, and intricate loopholes are written by humans?

Even the RAND Corporation cautions that over-reliance on AI could overlook nuanced perspectives essential for effective governance. AI’s capacity to aggregate vast data can still miss the subtle, context-dependent factors crucial for balanced policy decisions. Do your selected politicians care? I say SELECTED because AI is involved with that, too.

RELATED: The Department of Defense Posture for Artificial Intelligence (2019)

CAMPAIGN SCIENCE

Public opinion monitoring has emerged as a crucial area where AI proves invaluable. Sentiment analysis tools scan media, social networks, and forums to gauge public opinion on various policies, providing real-time insights. This feedback mechanism allows politicians to adjust their strategies accordingly, enhancing their responsiveness to public needs and concerns.
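To make the idea concrete, here is a minimal sketch of lexicon-based sentiment scoring, one of the simplest forms of sentiment analysis. The word lists, the function names `score_post` and `average_sentiment`, and the sample posts are all hypothetical and chosen only for illustration; real monitoring tools rely on far larger lexicons or trained language models.

```python
import re

# Hypothetical, tiny word lists for illustration only.
POSITIVE = {"good", "great", "support", "love", "helpful"}
NEGATIVE = {"bad", "terrible", "oppose", "hate", "wasteful"}


def score_post(text: str) -> int:
    """Return (number of positive words) minus (number of negative words)."""
    words = re.findall(r"[a-z']+", text.lower())
    positives = sum(w in POSITIVE for w in words)
    negatives = sum(w in NEGATIVE for w in words)
    return positives - negatives


def average_sentiment(posts: list[str]) -> float:
    """Average the per-post scores; 0.0 when there are no posts."""
    if not posts:
        return 0.0
    return sum(score_post(p) for p in posts) / len(posts)


# Made-up posts about a fictional bill.
sample_posts = [
    "I support the new transit bill, great idea",
    "This bill is wasteful and bad",
    "No strong opinion on the bill",
]

if __name__ == "__main__":
    for post in sample_posts:
        print(score_post(post), post)
    print("average:", average_sentiment(sample_posts))
```

Even this toy version shows the limits: sarcasm, context, and any word outside the list are invisible to it, which is the kind of nuance the critics cited in this article worry about.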

AI tools are not just about analyzing vast amounts of text data. They have the potential to understand the public’s mood, detect trends, and even predict electoral outcomes. A study by the Pew Research Center showcased this power, demonstrating how sentiment analysis can track public opinion shifts and forecast election results based on social media activity.

However, significant challenges are associated with relying solely on AI for public opinion monitoring. As reported by The Atlantic, these tools can create feedback loops that amplify certain opinions, potentially skewing the representation of public sentiment. We know this as propaganda. AI partaking in amplifying propaganda is where we are today. This amplification occurs because AI systems often prioritize more frequently mentioned or more emotionally charged opinions, which can distort the overall picture of public opinion. This phenomenon can lead to policymakers overemphasizing specific issues while neglecting others that might be equally important but less prominently featured in social media discussions.
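The amplification effect described above can be sketched with a few lines of arithmetic. This is an illustrative toy, not how any particular platform or vendor computes sentiment: the `Voice` class, the weighting rule (posts multiplied by emotional intensity), and the sample numbers are all assumptions made up for the example.

```python
from dataclasses import dataclass


@dataclass
class Voice:
    stance: float     # -1.0 (opposed) to 1.0 (in favor)
    posts: int        # how many times this person posted
    intensity: float  # 1.0 = calm; higher = more emotionally charged


def per_person_average(voices):
    """Every person counts once, no matter how loud they are."""
    return sum(v.stance for v in voices) / len(voices)


def amplified_average(voices):
    """Assumed weighting: frequent and emotionally charged voices count more."""
    weights = [v.posts * v.intensity for v in voices]
    weighted_sum = sum(w * v.stance for w, v in zip(weights, voices))
    return weighted_sum / sum(weights)


# Made-up population: 8 calm supporters, 2 loud and frequent opponents.
voices = [Voice(1.0, 1, 1.0)] * 8 + [Voice(-1.0, 10, 2.0)] * 2

if __name__ == "__main__":
    print("per-person average:", per_person_average(voices))
    print("amplified average:", amplified_average(voices))
```

In this made-up sample, eight of ten people are in favor, yet the weighted figure comes out negative because two loud voices dominate the count.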

Many argue that to minimize these risks, it’s essential to combine AI-driven sentiment analysis with traditional methods of public opinion research, such as surveys and focus groups, to ensure a more balanced and accurate understanding of the public’s views. This hybrid approach can help prevent the pitfalls of AI bias and provide a more comprehensive foundation for policy decisions. That ship has sailed. In the age of instant gratification and results, campaigns prefer efficiency over efficacy. If they couple that with “echo chamber” AI tools and other AI tools that mold public perception or access to information, they have little need to be concerned with what their constituents think. This is the reality of today’s campaigns. We see that every time there is a Congressional hearing: a dog and pony show to appease their base without results.

GOVERNMENT “DECISIONS” Powered by AI

AI’s impact on improved decision-making has been profound. It enables politicians and policymakers to make more informed decisions by analyzing vast amounts of data. This capability helps minimize risks and enhance decision quality by providing insights into trends, patterns, and potential outcomes. For instance, AI systems can process and interpret complex datasets from various sources, such as economic indicators, public health statistics, and social media sentiment, to guide policy decisions and anticipate future challenges.

The Center for Data Innovation highlights the significant advantages of AI in policy-making, noting that data-driven decisions can lead to more efficient resource allocation, better-targeted interventions, and overall improved governance. Additionally, using AI in predictive analytics allows for proactive measures, hence the “time travel” nickname. For example, AI can identify potential public health crises before they escalate or optimize traffic management systems to reduce congestion. AI can also manufacture such scenarios, taking control of all aspects of policy, laws, information access, discourse, and perception of such situations.

EFFICIENCY vs EFFICACY

Despite the apparent benefits of efficiency, there are valid concerns about the limitations of relying solely on data for decision-making. This is efficiency over efficacy. One crucial issue is the potential to overlook the human element, which is essential for effective governance. While data can provide a comprehensive picture of trends and patterns, it cannot capture the nuanced, context-specific insights from human experience and judgment, underscoring the need for a balanced approach.

POLITICAL DISCOURSE

This topic deserves a thorough analysis, but a simpler one than this, for one to understand how DISCOURSE is manipulated. I wrote articles years ago referencing this; some are cited below.

RELATED: Meet Google’s Deep State Policy Manipulator

RELATED: CASE LAW: Big Tech Companies Are Government Contractors, So Suppressing Free Speech Is Illegal

RELATED: This is Orwellian Warfare

AI is being used in propaganda that was actually legalized under the Obama Administration upon his exit. AI’s ability to micro-target individuals with tailored messages makes it a powerful tool for influencing public opinion. For instance, AI-driven propaganda can sway voters during election cycles by bombarding them with highly targeted ads and content that resonate with their specific concerns and biases.

This strategic targeting can manipulate perceptions and decisions, often without individuals being aware of the influence exerted upon them. The Atlantic reports on how AI-driven propaganda has been used to influence political outcomes and public sentiment, raising ethical questions about the manipulation of democratic processes.

Humans are now assisting such AI propaganda, posturing as the “cool lunch table” and excluding from the conversation anyone who is antipathetic to their goals. Most people call this a psychological operation but can’t conceive of the notion that AI controls the humans assisting it, whether through reward or through the AI’s “programmers” convincing them it is the only way.

In summary, AI adoption in government operations has already happened, and while it has provided significant efficiencies, it has also enhanced the government’s power and influence and omits the people it is supposed to serve. The best way to combat such a political landscape is to READ (people do not read anymore), ask questions, and most of all, TRUST YOUR GUT.

Now ponder how this affects voting…

Kelly

I appreciate all that you have written. Thank you for yet another shareable article I hope my people read.

I have a question. Do different gov’t agencies use disconnected AIs or are they created to be specific to the desired skills/tasks?

Is the magic wheel stacking juries able to communicate with whichever one is running our elections at DHS?